FlickerFix: Flicker Removal for LED Traffic Lights in Dashcam Videos

Copyright © 2025 Korean Institute of Broadcast and Media Engineers. All rights reserved.

“This is an Open-Access article distributed under the terms of the Creative Commons BY-NC-ND (http://creativecommons.org/licenses/by-nc-nd/3.0) which permits unrestricted non-commercial use, distribution, and reproduction in any medium, provided the original work is properly cited and not altered.”

Abstract

Many LED traffic lights control brightness with pulse-width modulation (PWM). When the frame rate of a dashcam is not synchronized with the PWM frequency, even by a small amount, flicker artifacts appear, making the signal look as if it blinks or disappears. Instead of relying on hardware-integrated solutions, we propose a three-stage post-processing method for flicker removal. First, we fine-tune a YOLO detector on domestic traffic light datasets. Second, a segmentation model trained on our synthesized signal-pseudo mask dataset extracts the active light region. Finally, corrupted signals are restored through reference-mask-based synthesis. We also construct two datasets: a large-scale ROI segmentation dataset with pseudo masks, and a synthetic flicker video dataset generated by simulating PWM-camera mismatch. We evaluate the method on 85 pairs of synthetic flicker videos, achieving improvements of +3.6 dB in PSNR, +0.30 in SSIM, and +0.19 in FDI. We also validate on real dashcam videos, showing consistent qualitative improvements. Because the approach is sensor-agnostic, it can be applied to existing dashcam footage and can support more reliable traffic-light recognition in future ADAS and autonomous driving systems.

Keywords:

Traffic Light, Flicker, Video Processing, Ego-camera Videos

Ⅰ. Introduction

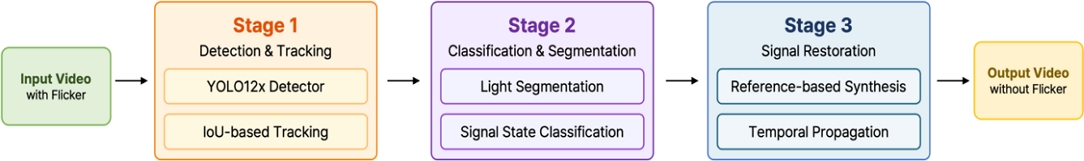

Modern LED traffic lights use PWM, which can cause flicker in dashcam videos when the camera frame rate is not synchronized with the PWM frequency (Figure 1). Prior studies have mostly focused on hardware solutions [1], while software-based flicker removal has mainly operated at the frame level [2] rather than on specific ROIs. As shown in Figure 2, our pipeline addresses flicker directly at the ROI level using a software-only approach. It is related to recent work on frame-level video deflickering [2]; whereas that work mitigates flicker across the entire frame, FlickerFix restores flicker within LED traffic-light ROIs. Beyond the removal pipeline itself, we also construct dedicated datasets that enable evaluation with established metrics such as PSNR, SSIM, and FDI [3].

Ⅱ. Proposed Method

1. Three-Stage Pipeline

We use a YOLO12x detector [4] fine-tuned on large-scale traffic light datasets [5][6]. Only horizontal signals are used, since this matches Korean road scenes. The bounding boxes are stabilized across frames with IoU-based temporal tracking.
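The IoU-based stabilization can be illustrated with a minimal sketch. The paper does not give the tracking details, so the function names, the matching threshold `iou_thresh`, and the smoothing weight `alpha` below are hypothetical choices for illustration only.

```python
from typing import List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def stabilize(prev: Optional[Box], detections: List[Box],
              iou_thresh: float = 0.3, alpha: float = 0.6) -> Optional[Box]:
    """Match the previous box to the best-overlapping detection and smooth it.

    iou_thresh and alpha are assumed values, not taken from the paper.
    """
    if prev is None:
        # No track yet: start from the first detection, if any.
        return detections[0] if detections else None
    best = max(detections, key=lambda d: iou(prev, d), default=None)
    if best is None or iou(prev, best) < iou_thresh:
        # No matching detection in this frame (e.g. the light appears dark):
        # hold the previous box so the ROI does not jump or vanish.
        return prev
    # Exponential smoothing between the previous box and the matched detection.
    return tuple(alpha * p + (1 - alpha) * d for p, d in zip(prev, best))
```

Holding the previous box when no detection overlaps is one simple way to keep an ROI alive through frames in which the lit lamp is invisible; a production tracker would likely also expire tracks after a number of missed frames.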

Next, we train a UNet segmentation model with an additional region head on our dataset. This model predicts the active light area directly, even under strong flicker.

The last stage restores missing or corrupted lights. If a stable ROI is available, we use it as the reference. If not, the brightness is estimated from nearby frames or carried over from the previous frame. This keeps the signal temporally stable without introducing new artifacts.
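The fallback order of the restoration stage can be sketched as follows. This is only an illustration under assumptions: each frame is reduced to a single ROI brightness value, corrupted frames are marked with `None`, and "estimated from nearby frames" is read as linear interpolation. The function name and its `reference` parameter are hypothetical.

```python
from typing import List, Optional


def restore_brightness(values: List[Optional[float]],
                       reference: Optional[float] = None) -> List[Optional[float]]:
    """Fill corrupted (None) per-frame ROI brightness values.

    Priority: (1) a stable reference value if one is available,
    (2) linear interpolation between the nearest valid frames,
    (3) the previous valid frame. A leading gap with no reference stays None.
    """
    out = list(values)
    known = [i for i, v in enumerate(values) if v is not None]
    for i, v in enumerate(values):
        if v is not None:
            continue
        if reference is not None:
            out[i] = reference
            continue
        left = max((k for k in known if k < i), default=None)
        right = min((k for k in known if k > i), default=None)
        if left is not None and right is not None:
            t = (i - left) / (right - left)
            out[i] = (1 - t) * values[left] + t * values[right]
        elif left is not None:
            out[i] = values[left]  # carry the previous valid frame forward
    return out
```

For example, `restore_brightness([1.0, None, 3.0])` interpolates the middle frame, while passing `reference=0.9` would use the reference value for it instead.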

2. Synthetic Dataset Generation

For testing and training, we build two datasets. One is a segmentation dataset of traffic lights. The other is a flicker dataset where we simulate PWM–camera mismatch with different settings.

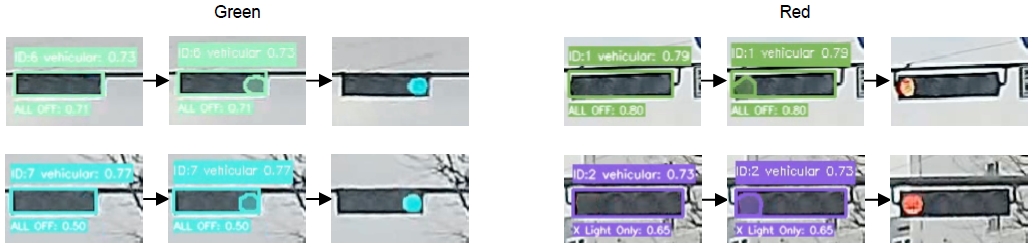

We first locate traffic light regions with object detection. The bounding boxes are then refined into pixel-level masks by simple color thresholding and morphological filtering. This produces a large paired dataset of 536,028 cropped ROI images (JPEG) with corresponding multi-attribute labels (JSON) and pseudo segmentation masks (PNG), as illustrated in Figure 3.
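The thresholding and morphological filtering step can be illustrated with a small dependency-free sketch. The paper does not state the thresholds or kernel, so the brightness and saturation limits (`min_value`, `min_spread`) and the 3x3 opening below are assumptions; a real pipeline would more likely rely on an image-processing library.

```python
from typing import Callable, Iterable, List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0-255
Mask = List[List[int]]


def color_threshold(img: List[List[Pixel]],
                    min_value: int = 180, min_spread: int = 60) -> Mask:
    """Mark pixels that are both bright and saturated (lit-lamp candidates).

    min_value and min_spread are assumed thresholds, not from the paper.
    """
    return [[1 if max(p) >= min_value and max(p) - min(p) >= min_spread else 0
             for p in row] for row in img]


def _morph(mask: Mask, op: Callable[[Iterable[int]], bool]) -> Mask:
    """Apply op (all = erosion, any = dilation) over each 3x3 neighbourhood."""
    h, w = len(mask), len(mask[0])

    def get(y: int, x: int) -> int:
        # Pixels outside the image count as background.
        return mask[y][x] if 0 <= y < h and 0 <= x < w else 0

    return [[1 if op(get(y + dy, x + dx)
                     for dy in (-1, 0, 1) for dx in (-1, 0, 1)) else 0
             for x in range(w)] for y in range(h)]


def opening(mask: Mask) -> Mask:
    """Erosion followed by dilation: removes specks smaller than the kernel."""
    return _morph(_morph(mask, all), any)
```

Applied to a crop with a 3x3 lit region and one isolated bright pixel, `opening(color_threshold(img))` keeps the region and discards the isolated pixel.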

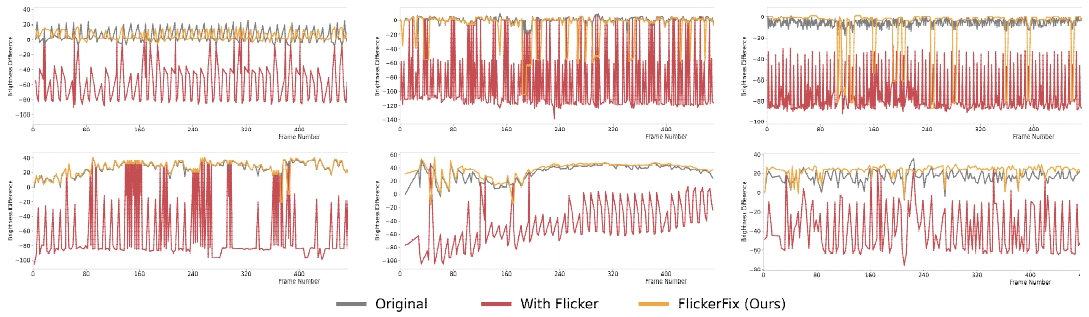

To test robustness against flicker, we synthesize flicker on 85 real dashcam videos using our proposed Algorithm 1, which modulates the brightness of the traffic-light region under varying signal and camera parameters. The flicker synthesis was performed with PWM frequencies of 120 Hz, 150 Hz, and 180 Hz, a camera frame rate of 27.5 fps, exposure times of 2 ms and 5 ms, and duty cycles of 0.3, 0.5, and 0.7. Each sequence includes a paired original and flickered version. Figure 4 shows the results of the flicker synthesis using Algorithm 1.
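To make the PWM-camera mismatch concrete, the sketch below computes, for each frame, the fraction of the exposure window during which an ideal square-wave PWM signal is on. This is an assumed simplified model (ideal square wave, global shutter, exposure starting at the frame timestamp) and not a reproduction of Algorithm 1; the function names are hypothetical.

```python
def on_time(t: float, freq: float, duty: float) -> float:
    """Total LED on-time accumulated in [0, t] for an ideal PWM square wave."""
    period = 1.0 / freq
    full, rem = divmod(t, period)
    # Each full period contributes duty * period; the partial period
    # contributes until the on-phase ends.
    return full * duty * period + min(rem, duty * period)


def exposure_gain(frame: int, fps: float, freq: float,
                  duty: float, exposure: float) -> float:
    """Fraction of the exposure window during which the LED is on (0..1)."""
    start = frame / fps
    lit = on_time(start + exposure, freq, duty) - on_time(start, freq, duty)
    return lit / exposure


if __name__ == "__main__":
    # Parameters taken from the settings listed above: 120 Hz PWM,
    # 27.5 fps, 2 ms exposure, duty cycle 0.5.
    for n in range(11):
        print(n, round(exposure_gain(n, 27.5, 120.0, 0.5, 0.002), 3))
```

With these settings the PWM phase advances by 120 / 27.5 = 4 + 4/11 periods per frame, so the pattern repeats every 11 frames, and some frames fall entirely within the off-phase. Multiplying the lamp brightness in the ROI by such a gain is one plausible way to produce a flickered sequence from a clean one.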

Ⅲ. Experimental Results

1. Qualitative Results

We test our pipeline on real dashcam videos. Figure 5 shows the process and results of our method, and Figure 6 compares temporal brightness consistency.

2. Quantitative Results

We evaluate restoration performance with three metrics: PSNR, SSIM, and FDI. The FDI (Flicker Detection Index) from IEEE P2020 [3] measures whether a flickering light remains visible against the background, computed from ROI brightness contrast with a threshold τ = 0.10. The FDI is computed as:

| (1) |

We focus on detailed mask-level evaluation within the ROI, mainly for the 27.5 fps case. As shown in Table 1, our method improves PSNR by 3.62 dB, SSIM by 0.3028, and FDI by 0.1919.
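As an illustration of mask-level evaluation, the sketch below computes PSNR only over pixels selected by a mask, using the standard definition PSNR = 10 log10(peak² / MSE). How the paper restricts the metric to the mask is not specified, so the flattened-image interface and the function name are assumptions.

```python
import math
from typing import Sequence


def masked_psnr(ref: Sequence[float], out: Sequence[float],
                mask: Sequence[int], peak: float = 255.0) -> float:
    """PSNR in dB over the pixels where mask is nonzero (flattened images)."""
    diffs = [(r - o) ** 2 for r, o, m in zip(ref, out, mask) if m]
    if not diffs:
        raise ValueError("mask selects no pixels")
    mse = sum(diffs) / len(diffs)
    # Identical pixels inside the mask give an unbounded PSNR.
    return math.inf if mse == 0 else 10.0 * math.log10(peak ** 2 / mse)
```

Restricting the error to the mask keeps the large, unchanged background from dominating the score, which matters when the lamp occupies only a small part of the ROI.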

Ⅳ. Conclusion

We presented FlickerFix, a software-based pipeline that detects and removes flicker artifacts in dashcam videos of LED traffic lights. Beyond the removal method itself, we contributed two dedicated datasets that enable reproducible training and evaluation. Experiments on 85 synthetic sequences and real dashcam footage demonstrate clear improvements in both perceptual quality and visibility stability. Unlike hardware-oriented solutions, our approach is fully sensor-agnostic and can be applied to existing recordings. Future work will address challenges in detecting very small or distant signals and extend the synthetic dataset generation to support more comprehensive and fair evaluation protocols.

Acknowledgments

This work was supported by the Institute of Information & communications Technology Planning & Evaluation (IITP) grant funded by the Korea government (MSIT) (No. RS-2022-00155915, Artificial Intelligence Convergence Innovation Human Resources Development (Inha University)). This work was also supported by the Institute of Information & communications Technology Planning & Evaluation (IITP) under the Leading Generative AI Human Resources Development (IITP-2025-RS-2024-00360227) grant funded by the Korea government (MSIT).

References

[1] LED Flicker Mitigation Technology for Automotive Use, https://www.sony-semicon.com/en/technology/automotive/lfm.html

[2] Jiang, Y., Xue, T., Brown, M., and Huang, J., “Blind video deflickering by neural filtering with a flawed atlas,” Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition, June 2023. https://doi.org/10.1109/CVPR52729.2023.01006

[3] Deegan, B., “A review of IEEE P2020 flicker metrics,” in Proc. IS&T Int’l. Symp. on Electronic Imaging: Autonomous Vehicles and Machines, vol. 34, pp. 108-1-108-6, January 2022. https://doi.org/10.2352/EI.2022.34.16.AVM-108

[4] Tian, Y., Ye, Q., and Doermann, D., “YOLOv12: Attention-centric real-time object detectors,” arXiv preprint arXiv:2502.12524, February 2025. https://doi.org/10.48550/arXiv.2502.12524

[5] AI Dataset for Traffic Light and Traffic Sign Detection in Seoul Capital Area, https://aihub.or.kr/aihubdata/data/view.do?currMenu=115&topMenu=100&dataSetSn=188, (accessed Sep. 2021).

[6] Traffic Light Signal Information Recognition Image Data in Various Driving Environments of Autonomous Vehicles, https://aihub.or.kr/aihubdata/data/view.do?currMenu=115&topMenu=100&dataSetSn=71579, (accessed Dec. 2023).