Enhancing Lossless Compression in AI-PCC via Distribution-Aware Feature Extraction

Copyright © 2025 Korean Institute of Broadcast and Media Engineers. All rights reserved.

“This is an Open-Access article distributed under the terms of the Creative Commons BY-NC-ND (http://creativecommons.org/licenses/by-nc-nd/3.0) which permits unrestricted non-commercial use, distribution, and reproduction in any medium, provided the original work is properly cited and not altered.”

Abstract

The Moving Picture Experts Group (MPEG) is currently standardizing AI-based Point Cloud Compression (AI-PCC). Since AI-PCC reconstructs point clouds using geometric features extracted from the input, reconstruction quality improves as the extracted features become more precise. However, although point clouds vary in their geometric distributions, the current AI-PCC framework extracts features using identical cubic receptive fields. To address this limitation, this paper proposes a distribution-aware feature extraction method that uses an orthotropic rect-kernel extractor/aggregator for dense point clouds to capture linear and planar characteristics, and a sparse dilated convolution extractor for sparse point clouds to reflect point dispersion. The proposed method enhances lossless compression performance in AI-PCC by adapting feature extraction to the structural heterogeneity of point clouds.

Keywords:

3D Point Cloud, Geometry Coding, AI-PCC, Feature Extraction, Lossless Coding

Ⅰ. Introduction

Point clouds represent real-world objects or scenes as a set of points in a three-dimensional coordinate system, making them an emerging next-generation 3D content format capable of capturing highly precise spatial information[1][2][3][4]. Each point contains geometric information such as its position (x, y, z) or (r, θ, ϕ) along with attribute information such as color or reflectance. However, compared to the conventional 2D image content, point clouds require tens of times more data, making compression technologies essential for efficient storage and transmission.

Accordingly, the Moving Picture Experts Group (MPEG) Working Group 7 (3D Graphics and Haptics, 3DGH) has been standardizing point cloud compression technologies since 2017. Representative standards include Video-based Point Cloud Compression (V-PCC)[5][6] and Geometry-based Point Cloud Compression (G-PCC)[7]. These standards are primarily signal processing-based approaches and achieve a balance between compression efficiency and reconstruction quality by exploiting the structural and spatial correlations inherent in point clouds.

Recently, with the advancement of artificial intelligence–based compression techniques, extensive research has been conducted to overcome the limitations of traditional signal processing–based compression methods[8][9][10][11][12]. Accordingly, at the 148th MPEG meeting held in November 2024, the standardization of AI-based Point Cloud Compression (AI-PCC) was officially launched under the AI-based 3D Graphics Coding (AI-GC) group[13][14][15].

AI-PCC performs the decoding process based on geometric features extracted from the input point cloud; thus, the precision and expressiveness of the extracted features play a crucial role in determining the overall coding performance. However, the current AI-PCC feature extraction framework still applies convolution operations with uniform cubic receptive fields[16], without considering that geometric distributions vary not only across different point cloud contents but also within a single point cloud. This uniform design limits the network’s ability to capture the directional and anisotropic characteristics of 3D structures. To address this limitation, this paper proposes a distribution-aware feature extraction method that consists of an orthotropic rect-kernel extractor/aggregator for dense point clouds to capture linear and planar features, and a sparse dilated extractor for sparse point clouds to represent dispersed structures.

Ⅱ. Background

Before describing the proposed technique, this section introduces the overall geometry compression method of TMAP (Test Model for AI-PCC)[18], the test model of the AI-PCC standard currently under development[17], to provide a better understanding of the proposed approach.

1. TMAP

TMAP fundamentally utilizes a hierarchical coding structure, starting from the bit-depth of the input point cloud and progressively performing down-sampling, followed by encoding at each down-sampled layer. The lossless compression method applied to each layer is referred to as Octree-based Coding, whereas the lossy compression method is known as Feature-based Coding, which is beyond the scope of this paper and is described in detail in [18].

When Octree-based Coding is applied to all layers, the entire input point cloud is encoded in a lossless manner. In contrast, lossy compression is achieved by applying Feature-based Coding from the input bit-depth down to a specific bit-depth, while Octree-based Coding is used for the remaining layers.
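The layer-wise choice between the two coding methods can be sketched as follows. This is a minimal illustration and not TMAP code: the function name `assign_layer_modes` and the parameter `lossy_down_to` are hypothetical, and layers are simply identified by their bit-depth, counted down to 1 for the sake of the example.

```python
def assign_layer_modes(input_bit_depth, lossy_down_to=None):
    """Return {bit_depth: mode} for layers input_bit_depth .. 1.

    lossy_down_to=None -> every layer uses Octree-based (lossless) coding.
    lossy_down_to=k    -> layers from input_bit_depth down to k use
                          Feature-based (lossy) coding; the rest use
                          Octree-based coding.
    """
    modes = {}
    for depth in range(input_bit_depth, 0, -1):
        if lossy_down_to is not None and depth >= lossy_down_to:
            modes[depth] = "feature"
        else:
            modes[depth] = "octree"
    return modes


# Lossless configuration: all layers are Octree-based.
lossless = assign_layer_modes(10)
# Lossy configuration (hypothetical split point at bit-depth 9).
lossy = assign_layer_modes(10, lossy_down_to=9)
```

In the lossless setting studied in this paper, only the first configuration is relevant.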

2. Overview of Octree-based Coding

The Octree-based Coding method extracts features according to the bit-depth of the input point cloud, estimates the probability of occupancy information based on these extracted features, and then performs entropy coding within a hierarchical structure.

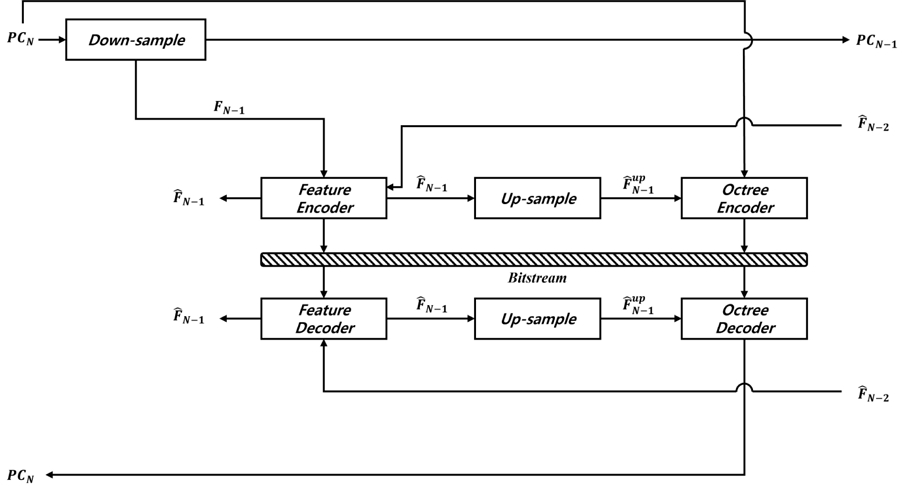

Figure 1 illustrates the Octree-based Coding structure of TMAP at an arbitrary bit-depth. The Down-sample, Up-sample, Feature Encoder, and Octree Encoder stages are performed in the encoder, while the Feature Decoder, Octree Decoder, and Up-sample stages are performed in the decoder.

Starting with the encoding process, the 3D input coordinates at bit-depth N (PCN) pass through the Down-sample module, which spatially down-samples the point cloud to PCN-1 while simultaneously extracting representative features FN-1, aligned to PCN-1, via sparse convolutions[19][20]. Next, the Feature Encoder uses the lower-bit-depth features, aligned with PCN-2, to condition a Hyperprior Synthesis Transform that estimates the distribution of the current features FN-1. The estimated probability distribution is then used for entropy encoding of the quantized current features. The reconstructed features are then up-sampled to the resolution of PCN. Finally, the Octree Encoder uses these up-sampled features to predict occupancy probabilities for points in PCN and performs arithmetic encoding conditioned on these probabilities.

In the decoding process, the Feature Decoder decodes the entropy-coded features, conditioned on the lower-bit-depth features, through the same Hyperprior Synthesis Transform used in the encoder. The reconstructed features are then passed to the Up-sample process to yield features at the resolution of PCN. Finally, these up-sampled features are fed into the Octree Decoder, which estimates occupancy probabilities using the same network architecture as the encoder and reconstructs PCN via arithmetic decoding conditioned on the predicted probabilities.
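The geometric part of this pipeline, that is, down-sampling by one bit-depth and coding child occupancy under predicted probabilities, can be sketched in plain Python. This is an illustration under stated assumptions rather than the TMAP implementation: the child bit ordering is arbitrary, coordinates are assumed to be non-negative integers, and the callable `prob` is a hypothetical stand-in for the network that predicts occupancy probabilities. The cost returned is the ideal arithmetic-coding length, not the size of an actual bitstream.

```python
import math


def downsample(points):
    """PC_N -> PC_{N-1}: halve each coordinate and drop duplicates."""
    return sorted({(x >> 1, y >> 1, z >> 1) for (x, y, z) in points})


def occupancy_codes(points):
    """Map each parent voxel in PC_{N-1} to an 8-bit child occupancy code."""
    codes = {}
    for (x, y, z) in points:
        parent = (x >> 1, y >> 1, z >> 1)
        # Child index from the least significant bit of each coordinate
        # (this ordering is an assumption made for illustration).
        child = ((x & 1) << 2) | ((y & 1) << 1) | (z & 1)
        codes[parent] = codes.get(parent, 0) | (1 << child)
    return codes


def ideal_bits(codes, prob):
    """Ideal code length: sum of -log2 of the probability of each outcome."""
    total = 0.0
    for parent, code in codes.items():
        for child in range(8):
            occupied = (code >> child) & 1
            p = prob(parent, child)  # predicted P(child is occupied)
            total += -math.log2(p if occupied else 1.0 - p)
    return total
```

With an uninformative prediction of 0.5 for every child, the cost is 8 bits per occupied parent voxel. Probabilities that are closer to the true occupancy lower the cost, which is why more informative features translate into a smaller bitstream.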

Ⅲ. Proposed Method

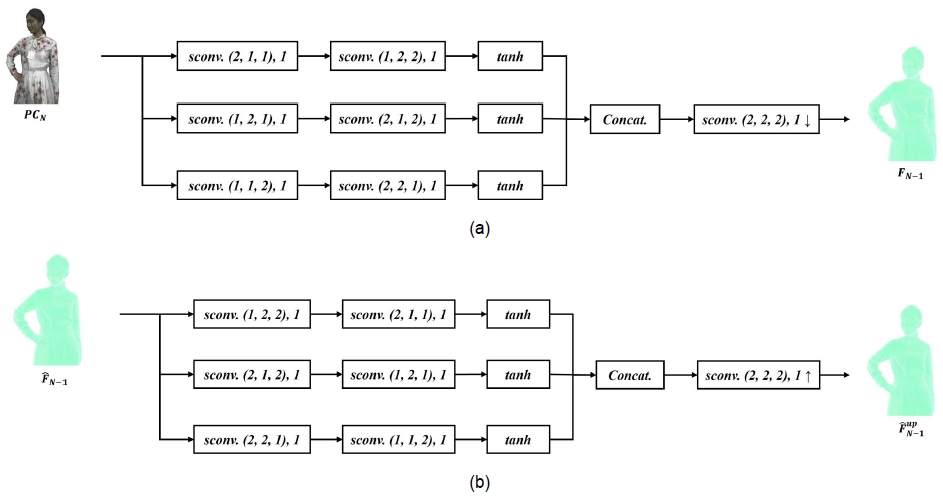

In this section, we present the proposed feature extraction/aggregation modules. These modules replace the Down-sample and Up-sample stages in Figure 1, performing spatial down/up-sampling and generating representative features tailored to the distributional characteristics of dense and sparse point clouds, respectively. To facilitate understanding of the proposed network diagrams, we first define the terminology and notation used in the architecture figures. “Sconv.” denotes a network block that performs sparse convolution. The tuple “(a, b, c)” indicates the kernel size, and the following scalar “d” denotes the dilation parameter. An optional arrow “↑” or “↓” indicates up-sampling or down-sampling with stride 2, respectively. “Tanh” is shorthand for the hyperbolic tangent activation function. “Concat.” refers to concatenation; in this work, independently extracted features are appended rather than summed, allowing subsequent layers to learn how to fuse them.

For dense point clouds, where local point density is relatively uniform and neighboring points often form planar or linear structures, we propose an orthotropic rect-kernel extractor and aggregator. The extractor and aggregator apply direction-wise rectangular kernels aligned with planar and linear orientations, aggregate the corresponding features, and fuse them into a unified feature representation. The detailed architecture is illustrated in Figure 2.

In the first stage, each branch applies axis-aligned, elongated kernels along the x-, y-, or z-axis to capture linear structures, as in Equation (1).

| (1) |

Here, XN is the input sparse tensor constructed from PCN: its coordinates follow PCN, and its features are initialized to ones. The kernels in Equation (1) are axis-aligned rectangular kernels, each elongated along the axis given by its subscript; d is the dilation parameter and s is the stride. The outputs Lx, Ly, and Lz are the line-oriented features extracted along the x-, y-, and z-axes, respectively.
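The effect of an axis-aligned elongated kernel on a sparse tensor whose features are all ones can be illustrated with a small sketch. Several things here are assumptions made for illustration: the kernel length of 5 is hypothetical, the weights are fixed to ones instead of being learned, and the convolution is written in plain Python rather than with a sparse-convolution library. Under these assumptions each output value simply counts the occupied voxels covered by the kernel.

```python
from itertools import product


def kernel_offsets(size, dilation=1):
    """Offsets of a centered (a, b, c) kernel with the given dilation."""
    axes = [[dilation * (i - (k - 1) // 2) for i in range(k)] for k in size]
    return list(product(*axes))


def sparse_conv_ones(coords, size, dilation=1):
    """Stride-1 sparse convolution with all-ones weights and features.

    The output is defined only at the input coordinates; each value is the
    number of occupied voxels covered by the kernel at that position.
    """
    occupied = set(coords)
    offsets = kernel_offsets(size, dilation)
    return {
        (x, y, z): sum((x + dx, y + dy, z + dz) in occupied
                       for dx, dy, dz in offsets)
        for (x, y, z) in occupied
    }


# Five voxels forming a line along the x-axis.
line = [(i, 0, 0) for i in range(5)]
l_x = sparse_conv_ones(line, (5, 1, 1))  # kernel elongated along x
l_y = sparse_conv_ones(line, (1, 5, 1))  # kernel elongated along y
```

At the center of the line, the x-elongated kernel covers all five voxels while the y-elongated kernel covers only the center voxel, so the three branches respond differently depending on the local orientation of the geometry.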

In the second stage, plane-oriented kernels aligned with the yz, xz, and xy planes transform these into planar responses, as in Equation (2).

| (2) |

Here, Pyz, Pxz, and Pxy are the plane-oriented responses for the yz-, xz-, and xy-planes, computed from the line-oriented features of Equation (1), and the kernels in Equation (2) are rectangular kernels spanning the indicated plane.

Finally, the branch outputs are passed through the Tanh activation function, concatenated in a channel-append manner, and fused by a stride-2 (s = 2) down-sampling convolution to produce FN-1, as in Equation (3).

| (3) |

Here, the concatenation in Equation (3) is coordinate-wise, and the mixing kernel blends the concatenated features Z within a local 2×2×2 neighborhood, producing a down-sampled, fused representation.
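The final fusion step (activation, channel-append concatenation, and stride-2 mixing over a 2×2×2 neighborhood) can be sketched as follows. This is a simplified illustration: the function names are hypothetical, features are stored in plain dictionaries, and the mixing kernel is reduced to one scalar weight per input channel, whereas a learned kernel would have separate weights per kernel position and output channel.

```python
import math


def tanh_concat(*branches):
    """Apply Tanh per branch, then append channels at identical coordinates."""
    coords = set(branches[0])
    assert all(set(b) == coords for b in branches), "branches must share coordinates"
    return {c: [math.tanh(v) for b in branches for v in b[c]] for c in coords}


def fuse_stride2(features, weights):
    """2x2x2, stride-2 mixing: each output voxel sums over its occupied children.

    weights[k] is one scalar per input channel (a stand-in for a learned kernel).
    """
    out = {}
    for (x, y, z), feats in features.items():
        parent = (x >> 1, y >> 1, z >> 1)
        mixed = sum(w * f for w, f in zip(weights, feats))
        out[parent] = out.get(parent, 0.0) + mixed
    return out


# Three single-channel branch outputs defined on the same two voxels.
branch = {(0, 0, 0): [1.0], (1, 0, 0): [1.0]}
z_cat = tanh_concat(branch, branch, branch)    # 3 channels per voxel
f_down = fuse_stride2(z_cat, [1.0, 1.0, 1.0])  # one voxel at half resolution
```

Because the channels are appended rather than summed, the fusion weights can treat the three directional branches differently, which is the motivation given above for concatenation.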

The next module is the orthotropic rect-kernel aggregator shown in Figure 2 (b), which replaces the Up-sample block in Figure 1. It takes the reconstructed features as input and outputs features up-sampled to the resolution of PCN. As in the orthotropic rect-kernel extractor, the flow is split into three branches, each with two convolutional stages. Unlike the extractor, the order is reversed: the first stage uses plane-oriented rectangular kernels aligned with the yz-, xz-, and xy-planes to aggregate planar features, and the second stage applies axis-aligned elongated kernels along the x-, y-, and z-axes to refine linear structures. The branch outputs pass through the activation function, are appended rather than summed, and are finally up-sampled via a stride-2 convolution to produce the up-sampled features.

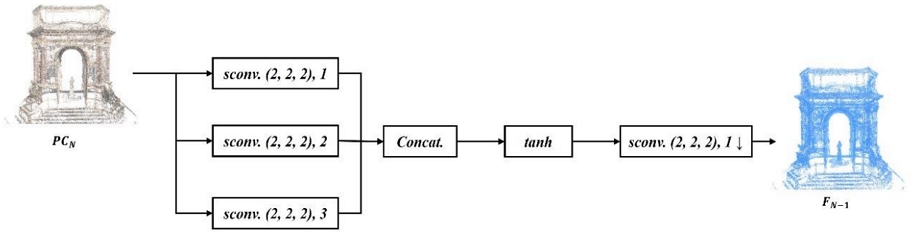

Next, we introduce the proposed sparse dilated extractor for improving feature extraction on sparse point clouds, as illustrated in Figure 3. This module targets point clouds with highly non-uniform, irregular spatial distributions by employing dilated sparse convolutions whose kernel taps are spatially dispersed. By enlarging the receptive field without increasing the number of kernel elements, the extractor captures long-range, low-density structures and aggregates features over the disconnected neighborhoods typical of sparse geometry. Note that we do not propose a new aggregator for sparse point clouds because the Up-sample stage in Figure 1 is not used in the current TMAP pipeline for sparse-point inference. Instead, a dedicated MLP-based feature aggregation stage is applied after feature extraction[21].

As shown in Figure 3, this module shares the same input–output interface as the extractor in Figure 2 (a), but its design differs. The sparse dilated extractor captures irregular sparsity by probing multi-radius neighborhoods via dilation. Throughout this extractor, the convolutional layers use a fixed kernel size with a different dilation rate (d1, d2, or d3) in each branch, as in Equation (4).

| (4) |

The features from each branch are concatenated (channel-append at identical coordinates) to form Zsparse, passed through the hyperbolic tangent (Tanh) activation function, and fused by a stride-2 convolution to produce FN-1, as in Equation (5).

| (5) |
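The way dilation enlarges the receptive field without adding kernel elements can be checked with a short sketch. The kernel size of 3 is an assumption made for illustration, the dilation rates (1, 2, 3) are the values reported later in the experiments, and the function names are hypothetical. Weights are fixed to ones, so each branch output is a count of occupied voxels at the probed positions.

```python
from itertools import product


def dilated_offsets(kernel=3, dilation=1):
    """Offsets of a centered kernel^3 cube whose taps are spaced by `dilation`."""
    half = (kernel - 1) // 2
    steps = [dilation * i for i in range(-half, half + 1)]
    return list(product(steps, repeat=3))


def multi_radius_counts(coords, center, dilations=(1, 2, 3), kernel=3):
    """Per-branch count of occupied voxels hit by each dilated kernel."""
    occupied = set(coords)
    cx, cy, cz = center
    return [
        sum((cx + dx, cy + dy, cz + dz) in occupied
            for dx, dy, dz in dilated_offsets(kernel, d))
        for d in dilations
    ]


# A sparse neighborhood: no two occupied voxels are adjacent.
sparse = [(0, 0, 0), (2, 0, 0), (0, 3, 0)]
counts = multi_radius_counts(sparse, center=(0, 0, 0))
```

Every branch uses 27 taps, but the branch with dilation d spans 2d + 1 voxels per axis. In the example, the undilated branch sees only the center voxel, while the dilated branches each reach one of the distant neighbors, which is the behavior the extractor relies on for dispersed geometry.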

Ⅳ. Experimental Results

This section presents the performance evaluation and analysis of the proposed method. Before detailing the results, we briefly summarize the information used for model training and inference.

1. Experimental Setup

The experiments follow the Common Test Conditions (CTC)[22] defined by the MPEG AI-GC group, which specify four dataset categories: RWTT, Static, Dynamic, and LiDAR. Since we focus on static scenes, we exclude Dynamic and LiDAR categories, and use RWTT and Static categories for both training and testing. RWTT[23] consists of dense point clouds obtained by sampling textured 3D mesh models represented by vertices, edges, and textures, while Static[24] consists of sparse, voxelized point-cloud frames that represent surfaces. Finally, the training and testing datasets within each category follow the same categorization defined in the CTC.

For RWTT content, we performed 50 epochs of base training using Adam (β1 = 0.9, β2 = 0.999, weight decay = 0) with an initial learning rate of 5×10⁻⁴, followed by 20 epochs of fine-tuning; a step-decay schedule halved the learning rate every 10 epochs. For Static content, we used the same optimizer and initial learning rate for 50 epochs of training with the learning rate likewise halved every 10 epochs. These procedures follow the CTC training methodology, which is recommended but not mandated, and, for fairness, we kept the training method consistent with the CTC models to ensure comparable model construction and evaluation. We trained on a single NVIDIA RTX A6000 (48 GB GDDR6, PCIe) paired with an AMD EPYC™ 7702P (64 cores @ 2.00 GHz) using PyTorch 2.4.1 with CUDA 11.8, with sparse-convolution operations implemented via MinkowskiEngine 0.5.4.
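The step-decay schedule described above amounts to the following rule. This is a minimal sketch: the function name is hypothetical, epochs are assumed to be counted from zero, and whether the schedule restarts at the beginning of fine-tuning is not stated in the text, so only the 50-epoch base training is shown. In PyTorch, the same rule corresponds to `torch.optim.lr_scheduler.StepLR` with `step_size=10` and `gamma=0.5`.

```python
def step_decay_lr(epoch, initial_lr=5e-4, step=10, factor=0.5):
    """Learning rate at a 0-based epoch when it is halved every `step` epochs."""
    return initial_lr * factor ** (epoch // step)


# Learning rate at the start of each 10-epoch block of the base training.
schedule = [step_decay_lr(e) for e in range(0, 50, 10)]
```

Over 50 epochs the learning rate is halved four times, so the last block runs at one sixteenth of the initial value.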

For inference, we used a single NVIDIA RTX 3090 Ti (24 GB GDDR6X, PCIe) with a 13th-Gen Intel Core™ i9-13900K (24 cores @ 3.00 GHz), running PyTorch 2.0.0 with CUDA 11.8 and MinkowskiEngine 0.5.4, and otherwise matching the training setup. All codec parameters followed the CTC test conditions for lossless geometry coding, and TMAP v3.1, the latest official AI-PCC test model, served as the anchor.

Lossless compression performance is assessed using the bits-per-input-point (bpip) ratio, defined as the bitstream size per input point of the proposed method divided by that of the anchor and reported as a percentage.
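The metric can be written out as a short computation. The function names and the numbers in the example are hypothetical and are not taken from the result tables; they only illustrate how a small absolute saving maps to a ratio.

```python
def bpip(bitstream_bits, num_input_points):
    """Bits per input point."""
    return bitstream_bits / num_input_points


def bpip_ratio_percent(proposed_bits, anchor_bits, num_input_points):
    """bpip of the proposed method divided by that of the anchor, in percent."""
    return 100.0 * bpip(proposed_bits, num_input_points) / bpip(anchor_bits, num_input_points)


# Hypothetical example: the proposed method saves 200 bits out of 1,000,000.
ratio = bpip_ratio_percent(proposed_bits=999_800,
                           anchor_bits=1_000_000,
                           num_input_points=500_000)
reduction = 100.0 - ratio
```

Because both methods code the same input, the number of input points cancels, so the bpip ratio equals the ratio of the two bitstream sizes. A value below 100% means the proposed method produces a smaller bitstream than the anchor.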

2. Compression performance of the proposed methods

After integrating the proposed method into TMAP v3.1, the performance of the orthotropic rect-kernel extractor and aggregator on RWTT content is summarized in Table 1.

Table 1. Lossless compression performance of the orthotropic rect-kernel extractor and aggregator compared to TMAP v3.1

As shown in Table 1, the proposed method reduced the bitstream size for all three test sequences. RWTT 156 Vishnu vox10 achieved the largest reduction, 2.9% in bpip ratio, while RWTT 059 Tomb vox10 showed the smallest, 200 bits. Although this difference is too small to be visible in the table, it corresponds to a reduction of approximately 0.02% in bpip ratio.

In terms of runtime overhead, which is presented in Table 2, we observed average increases of 1.6% on the encoder and 9.2% on the decoder. The increase in complexity is likely due to the additional GPU computations introduced by the proposed design. Unlike the previous approach, which used a single cubic kernel, the proposed method employs three separate branches, each with specially designed kernels to extract features along linear and planar directions, resulting in extra computational overhead on the GPU.

Similarly, Table 3 presents the performance of the proposed sparse dilated extractor, again with TMAP v3.1 as the anchor. The dilation rates in Equation (4) were set to d1 = 1, d2 = 2, and d3 = 3.

As shown in Table 3, all Static sequences exhibited bitstream reductions, with an average bpip ratio decrease of 1.9%. The largest reduction was observed for Facade 00009 vox12 at about 3.2%, while Shiva 00035 vox12 showed the smallest reduction at about 1.3%.

Similar to the RWTT results, the runtime shown in Table 4 increased on average by approximately 2.6% for the encoder and 9.5% for the decoder. This increase has the same cause as that discussed for Table 2: the proposed method uses three separate branches, each applying convolutions with a different dilation rate, which introduces additional computation.

In summary, by adapting kernel design to the distribution characteristics of dense and sparse point clouds, the proposed methods enable distribution-aware feature extraction, produce more informative features, improve the precision of occupancy probability estimation, and consequently reduce the bits per input point.

3. Analysis on compression performance of proposed methods

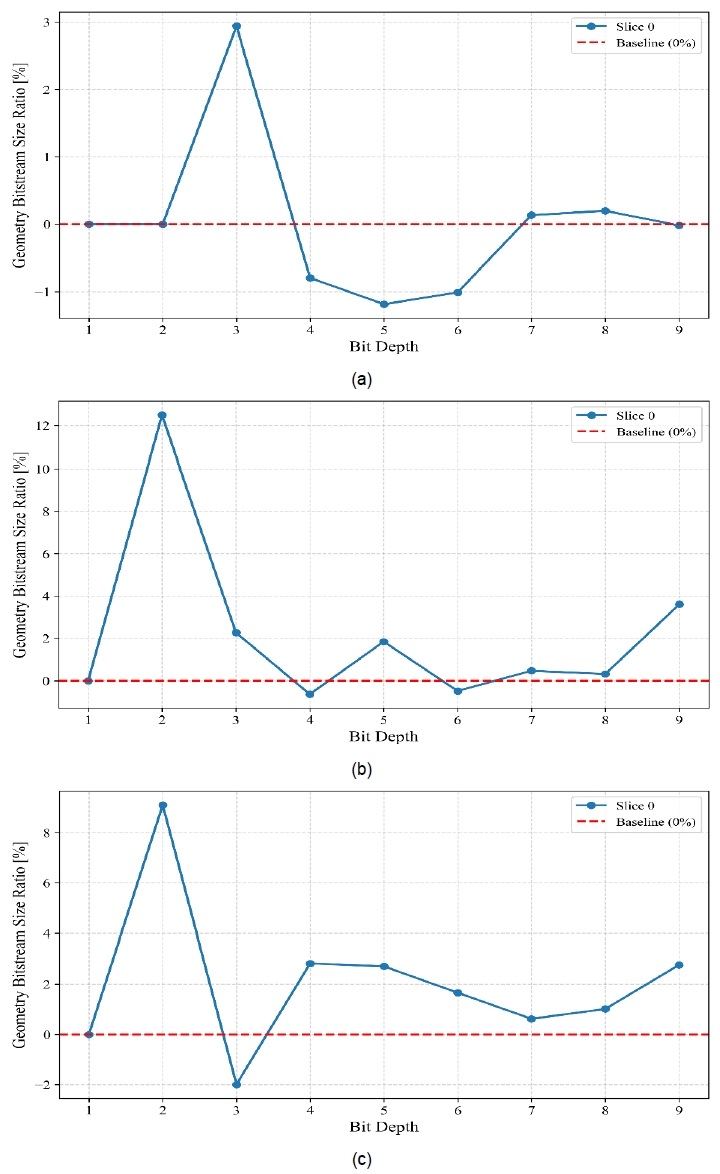

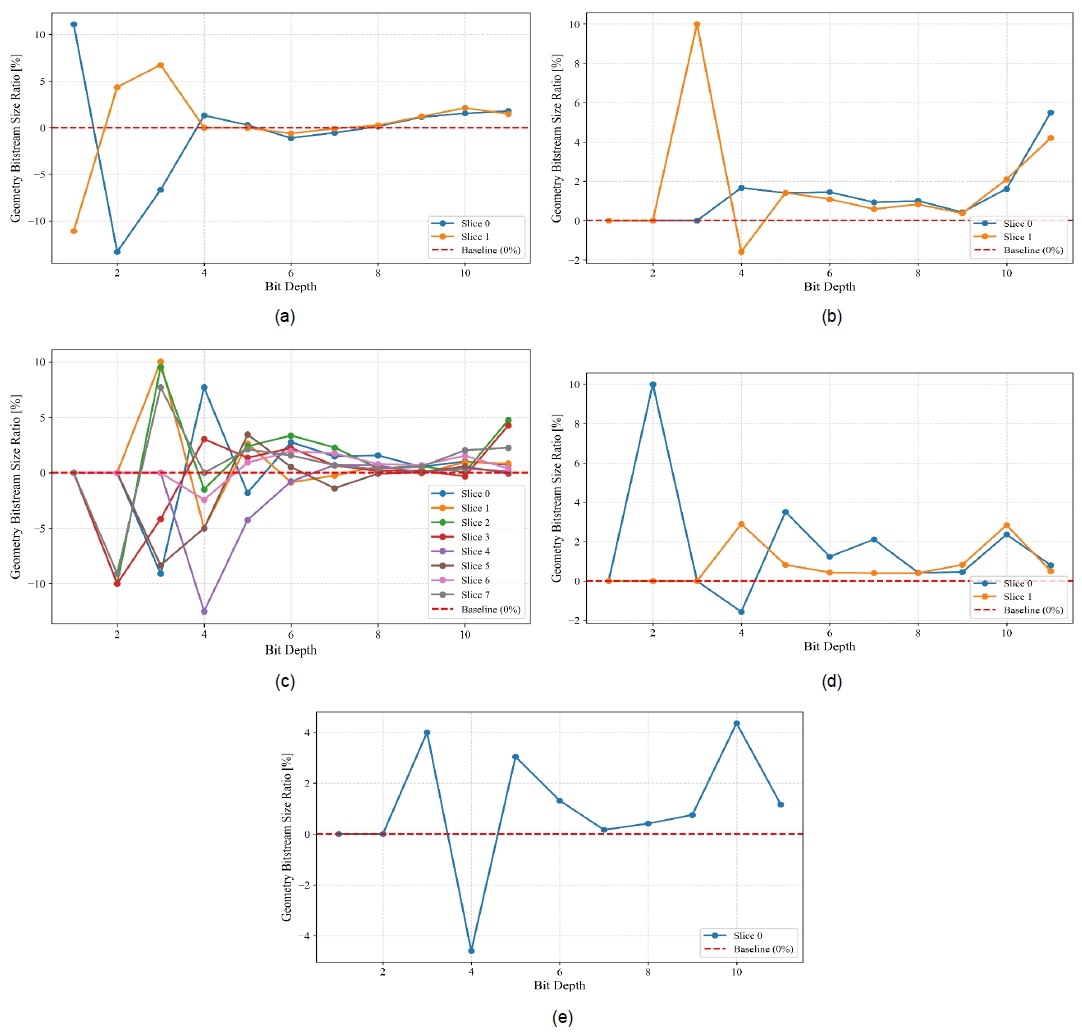

As described in Section Ⅱ-1, TMAP performs hierarchical coding according to the bit-depth of the input point cloud. Therefore, to analyze the performance of the proposed method in more detail, the efficiency of Octree-based Coding was examined at each hierarchical level. The analysis of the orthotropic rect-kernel extractor and aggregator for the RWTT content is shown in Figure 4, while that of the sparse dilated extractor for Static content is presented in Figure 5. In Figures 4 and 5, the y-axis represents the Geometry Bitstream Size Ratio (%), which indicates the percentage change in bitstream size at each level compared with the anchor. A positive value denotes an improvement achieved by the proposed method, whereas a negative value indicates a performance loss. For clearer visualization, a red baseline marks the point where the ratio equals zero. In addition, as indicated in the legend of each figure, the curves are labeled “Slice” followed by a number, which is the slice_id used to distinguish the slices into which the tested point cloud sequence is subdivided.

Figure 4. Performance of the orthotropic rect-kernel extractor and aggregator across bit-depths for RWTT contents: (a) RWTT 059 Tomb vox10, (b) RWTT 156 Vishnu vox10, and (c) RWTT 211 Foxstatue vox10

Figure 5. Performance of the sparse dilated extractor across bit-depths for Static contents: (a) Arco Valentino Dense vox12, (b) Facade 00009 vox12, (c) House Without Roof 00057 vox12, (d) Shiva 00035 vox12, and (e) Statue Klimt vox12

As shown in Figure 4, the performance trends for the RWTT test sequences exhibit highly consistent patterns across different bit-depths. While noticeable gains are observed at very low bit-depths, the mid-level layers show irregular variations in performance depending on the content. However, as the bit-depth increases, the results tend to converge toward regions of improvement. Although several mid-level layers experience local performance losses, the overall compression efficiency is primarily influenced by the highest bit-depth layers, which contain the largest data volume. Consequently, an average compression gain can be observed in the overall performance evaluation.

Figure 5 presents the performance of the proposed method for Static contents across different bit-depths. As shown in Figure 5, irregular gains and losses are observed at the lower and middle bit-depth layers for each content, and no consistent trend can be identified. In some cases, even different slices within the same content exhibit opposite characteristics such as Slice 0 and Slice 1 in Figure 5 (a), or Slice 1 and Slice 3 in Figure 5 (c). However, across all contents and slices, the results tend to either maintain or achieve performance gains at higher bit-depths, ultimately leading to an overall compression improvement.

From the analyses of Figures 4 and 5, it can be concluded that both proposed structures achieve compression gains primarily because their inference capability is more effective at higher bit-depths, where the spatial resolution is higher. Conversely, the performance variation across lower and intermediate layers appears to be less consistent. This indicates that the proposed methods are particularly effective in estimating occupancy probabilities for geometrically complex regions at high resolutions.
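The relation between the per-level ratio plotted in Figures 4 and 5 and the overall gain can be made concrete with a small sketch. The formula is an assumption consistent with the stated sign convention (positive means fewer bits than the anchor), the function names are hypothetical, and the per-level bit counts are invented for illustration and are not measured results.

```python
def level_gain_percent(anchor_bits, proposed_bits):
    """Positive when the proposed method spends fewer bits than the anchor."""
    return 100.0 * (anchor_bits - proposed_bits) / anchor_bits


def overall_gain_percent(anchor_by_level, proposed_by_level):
    """Gain over the whole bitstream; layers with more bits dominate."""
    total_anchor = sum(anchor_by_level.values())
    total_proposed = sum(proposed_by_level.values())
    return level_gain_percent(total_anchor, total_proposed)


# Hypothetical bits per bit-depth level: a loss at a low level,
# no change at a middle level, and a gain at the highest level.
anchor = {8: 1_000, 9: 10_000, 10: 100_000}
proposed = {8: 1_100, 9: 10_000, 10: 97_000}

per_level = {d: level_gain_percent(anchor[d], proposed[d]) for d in anchor}
overall = overall_gain_percent(anchor, proposed)
```

In this example the 10% loss at level 8 costs only 100 bits, while the 3% gain at level 10 saves 3,000 bits, so the overall result remains a gain of about 2.6%. This is the mechanism by which losses at lower or intermediate layers can coexist with an overall compression improvement.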

Ⅴ. Conclusion

In this paper, we proposed two types of feature processing modules to enhance the feature representation and generation capability of TMAP, the current test model of the AI-PCC standard. For dense point cloud compression, we designed the orthotropic rect-kernel extractor and aggregator, which learn directional features along linear and planar orientations. For sparse point clouds, we introduced a sparse dilated extractor that captures irregular spatial sparsity. The proposed methods were developed to improve occupancy probability prediction, thereby achieving gains in lossless compression efficiency. As confirmed in Section Ⅳ, the proposed techniques do not exhibit uniform performance across all bit-depths; rather, the most significant gains are consistently observed at higher bit-depths, where the geometric structure becomes more complex. This implies that the proposed architectures are particularly effective at extracting and utilizing features in geometrically intricate, high-resolution regions. As a future direction, we plan to refine the framework to robustly exploit feature information even in lower bit-depth layers, thereby ensuring consistent performance across all hierarchical levels and further improving the overall compression efficiency.

Acknowledgments

This work was supported by an internal grant from the Electronics and Telecommunications Research Institute (ETRI) [24BC2100, Development of High-Quality, Ultra-Fast Rendering and Compression Technologies Based on Neural Radiance Field Representation] and the Ministry of Science and ICT (MSIT), Korea, under the Information Technology Research Center (ITRC) support program (IITP-2025-RS-2021-II212046) supervised by the Institute for Information & Communications Technology Planning & Evaluation (IITP).

References

[1] C.-Y. Tsai and S.-H. Tsai, “Simultaneous 3D object recognition and pose estimation based on RGB-D images,” IEEE Access, Vol. 6, pp. 28859–28869, Feb. 2018. https://doi.org/10.1109/ACCESS.2018.2808225

[2] J. Zhou, H. Xu, Z. Ma, Y. Meng, and D. Hui, “Sparse point cloud generation based on turntable 2D lidar and point cloud assembly in augmented reality environment,” in Proc. IEEE Int. Instrum. Meas. Technol. Conf. (I2MTC), Glasgow, United Kingdom, pp. 1–6, 2021. https://doi.org/10.1109/I2MTC50364.2021.9459981

[3] K. Lee, J. Yi, Y. Lee, S. Choi, and Y. M. Kim, “GROOT: A real-time streaming system of high-fidelity volumetric videos,” in Proc. Int. Conf. Mobile Comput. Netw., New York, USA, pp. 1–14, 2020. https://doi.org/10.1145/3372224.3419214

[4] S. Jia, X. Gong, F. Liu, and L. Ma, “AI-powered LiDAR point cloud understanding and processing: An updated survey,” IEEE Trans. Intell. Transport. Syst., Vol. 26, No. 8, pp. 11249–11275, Aug. 2025. https://doi.org/10.1109/TITS.2025.3568500

[5] S. Rhyu, J. Kim, J. Im, and K. Kim, “Contextual homogeneity-based patch decomposition method for higher point cloud compression,” IEEE Access, Vol. 8, pp. 207805–207812, Nov. 2020. https://doi.org/10.1109/ACCESS.2020.3038800

[6] S. Schwarz, M. Preda, V. Baroncini, M. Budagavi, P. Cesar, P. A. Chou, et al., “Emerging MPEG standards for point cloud compression,” IEEE J. Emerg. Sel. Top. Circuits Syst., Vol. 9, No. 1, pp. 133–148, Mar. 2019. https://doi.org/10.1109/JETCAS.2018.2885981

[7] S. Rhyu, J. Kim, G. H. Park, and K. Kim, “Low‐complexity patch projection method for efficient and lightweight point‐cloud compression,” ETRI J., Vol. 46, No. 4, pp. 683–696, Aug. 2024. https://doi.org/10.4218/etrij.2023-0242

[8] J. Pang, M. A. Lodhi, and D. Tian, “GRASP-Net: Geometric residual analysis and synthesis for point cloud compression,” in Proc. 1st Int. Workshop on Advances in Point Cloud Compression, Processing and Analysis (APCCPA ’22), New York, USA, pp. 11–19, Oct. 2022. https://doi.org/10.1145/3552457.3555727

[9] J. Wang, D. Ding, Z. Li, X. Feng, C. Cao, and Z. Ma, “Sparse tensor-based multiscale representation for point cloud geometry compression,” IEEE Trans. Pattern Anal. Mach. Intell., Vol. 45, No. 7, pp. 9055–9071, Jul. 2023. https://doi.org/10.1109/TPAMI.2022.3225816

[10] M. Yim and J.-C. Chiang, “Mamba-PCGC: Mamba-based point cloud geometry compression,” in Proc. IEEE Int. Conf. Image Process. (ICIP), Abu Dhabi, United Arab Emirates, pp. 3554–3560, Oct. 2024. https://doi.org/10.1109/ICIP51287.2024.10647269

[11] L. Xie, W. Gao, H. Zheng, and G. Li, “SPCGC: Scalable point cloud geometry compression for machine vision,” in Proc. IEEE Int. Conf. Robot. Autom. (ICRA), Yokohama, Japan, pp. 17272–17278, May 2024. https://doi.org/10.1109/ICRA57147.2024.10610894

[12] J. Prazeres, R. Rodrigues, M. Pereira, and A. M. G. Pinheiro, “Performance analysis of deep learning-based lossy point cloud geometry compression coding solutions,” IEEE Access, Vol. 13, pp. 76000–76015, Apr. 2025. https://doi.org/10.1109/ACCESS.2025.3561895

[13] M. Preda and G. Bregeon, “Consolidated report on AI-based point cloud compression results,” in ISO/IEC JTC1/SC29/WG7 MPEG input document m69550, Kemer, Nov. 2024.

[14] J. Wang, R. Xue, J. Li, D. Ding, Y. Lin, and Z. Ma, “A versatile point cloud compressor using universal multiscale conditional coding – Part I: Geometry,” IEEE Trans. Pattern Anal. Mach. Intell., Vol. 47, No. 1, pp. 269–287, Jan. 2025. https://doi.org/10.1109/TPAMI.2024.3462938

[15] J. Wang, R. Xue, J. Li, D. Ding, Y. Lin, and Z. Ma, “A versatile point cloud compressor using universal multiscale conditional coding – Part II: Attribute,” IEEE Trans. Pattern Anal. Mach. Intell., Vol. 47, No. 1, pp. 252–268, Jan. 2025. https://doi.org/10.1109/TPAMI.2024.3462945

[16] M. A. Lodhi, J. Pang, J. Ahn, and D. Tian, “[AI-GC][CfP-response] UniFHiD Part 1: InterDigital’s answer to MPEG AI-PCC for Track 1 Geometry-only,” in ISO/IEC JTC1/SC29/WG7 MPEG input document m70282, Kemer, Turkey, Nov. 2024.

[17] MPEG 3D Graphics Coding and Haptics Coding, “Working draft of AI-based point cloud coding,” in ISO/IEC JTC1/SC29/WG7 MPEG output document N01257, July 2025.

[18] MPEG 3D Graphics Coding and Haptics Coding, “TMAP v3 for AI-based point cloud coding,” in ISO/IEC JTC1/SC29/WG7 MPEG output document N01258, July 2025.

[19] B. Graham, “Sparse 3D convolutional neural networks,” in Proc. Brit. Mach. Vis. Conf. (BMVC), Swansea, U.K., pp. 150.1–150.9, Sep. 2015. https://doi.org/10.5244/C.29.150

[20] C. Choy, J. Gwak, and S. Savarese, “4D spatio-temporal convnets: Minkowski convolutional neural networks,” in Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR), Long Beach, CA, USA, pp. 3075–3084, Jun. 2019. https://doi.org/10.1109/CVPR.2019.00319

[21] B. Liu, Y. Ma, L. Li, and D. Liu, “Report on probability estimation,” in ISO/IEC JTC1/SC29/WG7 MPEG input document m72144, Online, Apr. 2025.

[22] MPEG 3D Graphics Coding and Haptics Coding, “CTC on AI-based point cloud coding,” in ISO/IEC JTC1/SC29/WG7 MPEG output document N01256, July 2025.

[23] A. Maggiordomo, F. Ponchio, P. Cignoni, and M. Tarini, “Real-world textured things: A repository of textured models generated with modern photo-reconstruction tools,” Comput. Aided Geometric Des., Vol. 83, No. 101943, Nov. 2020. https://doi.org/10.1016/j.cagd.2020.101943

[24] Culture 3D Clouds, http://c3dc.fr, (accessed Oct. 14, 2025).

- 2024 : Bachelor of Science in Electronic Engineering, Kyung Hee University

- 2024 ~ Present : Master’s Course of Electronics and Information Convergence Engineering, Kyung Hee University

- ORCID : https://orcid.org/0009-0006-2150-4488

- Research interests : Point Cloud Compression, Image Processing, Multimedia Systems

- 2024 : Bachelor of Electronic Engineering and Astronomy & Space Science, Kyung Hee University

- 2024 ~ Present : Master’s Course of Electronics and Information Convergence Engineering, Kyung Hee University

- ORCID : https://orcid.org/0009-0003-8085-1170

- Research interests : Point Cloud Compression, Image Processing, Multimedia Systems

- 2017 : Bachelor of Science in Electronic Engineering, Kyung Hee University

- 2019 : Master of Science in Electrical and Electronic Engineering, Kyung Hee University

- 2022 : Ph.D. in Electrical and Electronic Engineering, Kyung Hee University

- 2022 ~ 2023 : TTA Senior Engineer

- 2024 ~ Present : ETRI Research Engineer

- ORCID : https://orcid.org/0000-0002-0287-9640

- Research interests : Point Cloud Compression, Deep Learning, Image Processing, Multimedia Systems

- 1989 : Bachelor of Science in Electronic Engineering, Hanyang University

- 1996 : Ph.D. in Electrical and Electronic Engineering, University of Newcastle upon Tyne, U.K.

- 1996 ~ 1997 : Research Fellow, University of Sheffield, U.K.

- 1997 ~ 2006 : Team Leader, Interactive Media Research Team, Electronics and Telecommunications Research Institute (ETRI), Korea

- 2006 ~ Present : Professor, College of Electronics and Information, Kyung Hee University, Korea

- ORCID : https://orcid.org/0000-0003-1553-936X

- Research interests : Digital Broadcasting, Image Processing, Multimedia Communication, Interactive Media Systems